Tools That Reduce Risk in AI-Driven Workflows

A practical guide to reducing risk in AI-driven workflows using detection, fact-checking, and content verification tools combined with human oversight.

Every team using AI to produce content or customer-facing material is carrying a risk they probably have not fully mapped. A blog post goes live with a paragraph that reads as if it were generated rather than written. A client proposal includes a hallucinated statistic. An internal report circulates with phrasing lifted from a training dataset nobody checked. These are not edge cases. They are a typical Tuesday.

The companies getting burned are not the ones using AI. They are the ones using it without a safety layer. The gap between what the model produces and what actually gets published is where the risk lives. What follows is a breakdown of the specific tools that close that gap, organized by the type of risk each one addresses.

Most AI risk conversations focus on the big existential questions. In practice, the risks that actually cost businesses money are far more mundane. Content that triggers a plagiarism flag on a client’s end. Marketing copy identical to that of three competitors because everyone used the same model. Legal language that was generated confidently but is factually wrong. Customer emails that sound robotic enough to damage the brand. These are not failures of the AI. They are failures of the process. And the cost compounds. One bad paragraph is forgettable. A pattern of unverified output erodes trust and eventually lands on someone’s desk as a formal complaint.

No single tool covers every risk. The strongest workflows layer multiple tools, each catching a different type of problem. Here is an honest breakdown of what is available and what each one actually does.

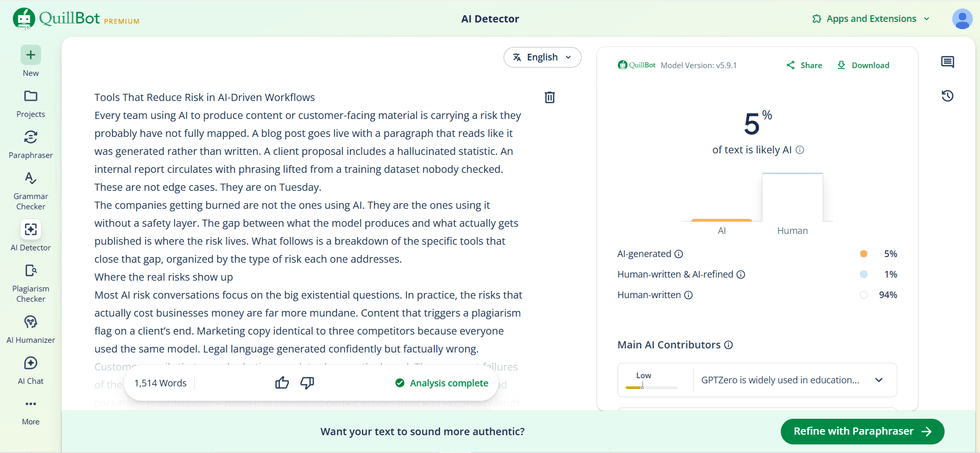

QuillBot’s AI Detector scans text and identifies which sections show patterns consistent with machine generation. It covers major language models, including GPT-4, GPT-5, Claude, and Gemini, and provides passage-level flagging rather than a single pass-or-fail score. It is particularly useful for distinguishing between content that was fully AI-generated and content that was human-written but polished with AI editing tools. That distinction is what reduces false positives. For content teams, running a piece through this before publication is the equivalent of a spell check: you do not run it because you expect problems; you run it because catching something before it ships is always cheaper than catching it after.

Originality.ai takes a similar approach but adds a broader suite that includes plagiarism checking and readability scoring alongside its AI detection. It is popular with agencies and publishers who want a single dashboard that covers multiple quality dimensions, rather than switching between tools.

GPTZero is widely used in education and academic publishing. Its strength is granular sentence-level analysis, which makes it well-suited for reviewing student work or academic manuscripts where identifying exactly which passages were AI-generated matters more than a document-level score.

Sapling AI Detection is built for enterprise teams. It integrates via API with existing content management systems and editorial workflows, making it a strong fit for organizations that need detection at scale without requiring writers to use a separate tool.

Perplexity AI operates as an AI-powered search engine that cites its sources. In citation mode, it traces every claim back to a specific source, which makes it useful for verifying statistics, quotations, and factual claims that a language model might have fabricated. It does not replace deep primary-source research, but it is the fastest way to catch obvious hallucinations before they reach the audience.

Google Scholar remains the most reliable free tool for verifying academic claims, research findings, and data points. When AI-generated content cites a study, Google Scholar is the first place to confirm that the study exists and that the data matches what is being reported.

Consensus AI searches through peer-reviewed scientific papers and synthesizes findings on specific questions. For teams producing health, science, or policy content where factual accuracy is legally or reputationally critical, Consensus provides an additional layer of verification beyond general-purpose search.

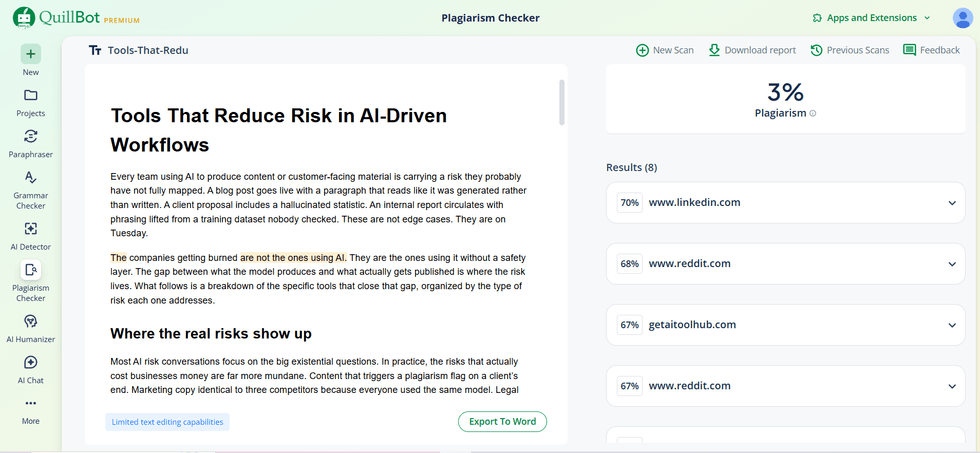

Copyscape is the industry standard for checking the originality of web content. It compares text against indexed web pages and flags passages that match existing published material. For agencies delivering client work, running a final Copyscape check before delivery has been standard practice for years, and it is even more important now that AI can produce text that inadvertently mirrors its training data.

Turnitin is the dominant tool in academic and educational contexts. It checks submissions against an enormous database of academic papers, student work, and web content. Institutions that already use Turnitin for plagiarism detection are increasingly relying on its AI detection module as well, making it a two-in-one solution for educational publishers.

Grammarly’s originality checker is integrated into the same writing assistant that many teams already use for grammar and style. It flags passages that overlap with online sources and provides links to the matching content. For teams that already pay for Grammarly Business, it adds an originality layer without requiring a separate tool.

Writer is purpose-built for enterprise content teams. It allows organizations to define their brand voice, terminology, and style rules, then checks every piece of content against those standards before publication. For teams where five different people use AI to draft content and everything needs to sound like it came from the same brand, Writer is the tool that enforces that consistency.

Acrolinx takes a similar approach but is aimed at larger enterprises with complex governance requirements. It integrates with content management systems, authoring tools, and translation platforms, making it suitable for multinational organizations where brand voice needs to hold across multiple languages and markets.

Grammarly Business covers the most common ground. Its tone detector and style suggestions are not as deeply customizable as Writer or Acrolinx, but for small to mid-sized teams that need a reliable baseline for tone and clarity, it handles the job at a fraction of the cost.

Every tool on this list reduces risk. None eliminates it. The final layer in any AI-driven workflow that takes quality seriously is a human being who reads the output with fresh eyes and asks what software cannot: does this make sense in context? Would we say this to a client’s face? Is this the best version or just the fastest? The best teams treat human review as the stage where AI output becomes company output. The reviewer adds the insider detail, cuts the paragraph that adds nothing, and rephrases the sentence that sounds like a prompt. That is where AI content stops being generic and starts being yours.

The strongest content operations run these tools in sequence, not in isolation. The writer drafts with AI assistance. The output passes through an AI detector to flag machine-patterned sections. A plagiarism checker confirms originality. A fact-checking step verifies any claims or data. A brand voice tool ensures tonal consistency. And a human reviewer makes the final call. That entire pipeline adds ten to fifteen minutes per piece. Compare that to the cost of publishing something that damages credibility, loses a client, or triggers a formal complaint, and the math writes itself.
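The sequence above can be sketched in a few lines of Python. This is a minimal illustration, not a real integration: every check function below is a hypothetical stand-in (a stock-phrase list, a tiny set of known sentences, a digit search, a banned-term list) for whichever detection, plagiarism, fact-checking, or brand voice tool a team actually uses. The names `Finding`, `Draft`, `run_pipeline`, and `can_publish` are assumptions made for the example.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Finding:
    stage: str    # which stage raised the warning
    message: str  # human-readable note for the reviewer


@dataclass
class Draft:
    text: str
    findings: List[Finding] = field(default_factory=list)


# A check takes the draft text and returns a list of warnings.
Check = Callable[[str], List[str]]


def split_sentences(text: str) -> List[str]:
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


# Hypothetical stand-ins. A real workflow would call an actual tool in each.
def detect_machine_patterns(text: str) -> List[str]:
    stock = ["in today's fast-paced world", "delve into"]
    return [f"stock phrase: {p!r}" for p in stock if p in text.lower()]


def check_originality(text: str) -> List[str]:
    known = {"the customer is always right."}
    return [f"matches known text: {s!r}"
            for s in split_sentences(text) if s.lower() in known]


def check_claims(text: str) -> List[str]:
    # Any sentence containing a number is queued for source verification.
    return [f"verify source for: {s!r}"
            for s in split_sentences(text) if re.search(r"\d", s)]


def check_brand_voice(text: str) -> List[str]:
    banned = ["synergy"]
    return [f"off-brand term: {w!r}" for w in banned if w in text.lower()]


# Stages run in the order described in the text.
STAGES: List[Tuple[str, Check]] = [
    ("ai_detection", detect_machine_patterns),
    ("plagiarism", check_originality),
    ("fact_check", check_claims),
    ("brand_voice", check_brand_voice),
]


def run_pipeline(text: str, stages: List[Tuple[str, Check]] = STAGES) -> Draft:
    draft = Draft(text)
    for name, check in stages:
        draft.findings.extend(Finding(name, m) for m in check(text))
    return draft


def can_publish(draft: Draft, reviewer_approved: bool) -> bool:
    # Automated findings inform the reviewer; the human makes the final call.
    return reviewer_approved


sample = "In today's fast-paced world, synergy matters. Revenue grew 40% last year."
result = run_pipeline(sample)
for f in result.findings:
    print(f"[{f.stage}] {f.message}")
```

The design choice worth noting is that no automated stage blocks publication on its own. Each one only attaches findings to the draft, and the reviewer's decision is the single gate, which mirrors the role the human plays in the workflow described here.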

No individual tool solves the AI quality problem. What works is a layered approach where each tool catches a category of risk the others miss. Detection for machine patterns. Fact-checking for hallucinations. Plagiarism scanning for originality overlap. Voice tools for brand consistency. Human review for the judgment calls. The teams building this system now are not slowing down their AI workflows. They are making them sustainable. The ones skipping these steps are accumulating a debt that will come due at the worst possible moment.

Three to four tools cover the critical bases for most teams: an AI detector like QuillBot’s, a plagiarism checker like Copyscape or Grammarly, a brand voice tool, and a fact-checking process. The goal is to cover distinct risk categories, not build a bloated stack. If two tools are flagging the same problems and nothing else, one is redundant.
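One rough way to test for that kind of redundancy is to log which problems each tool flagged across a sample of pieces and compare the sets. The sketch below assumes a team keeps such a log. The tool names, the problem IDs, and the `redundant_pairs` helper are all hypothetical, and treating "everything tool A flagged was also flagged by tool B" as redundancy is a slightly looser reading of the rule than strict equality.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple


def redundant_pairs(flags: Dict[str, Set[str]]) -> List[Tuple[str, str]]:
    """Return (tool, covered_by) pairs where `tool` flagged nothing
    that `covered_by` did not also flag on the sample."""
    pairs = []
    for a, b in combinations(sorted(flags), 2):
        fa, fb = flags[a], flags[b]
        if not fa or not fb:
            # A tool that flagged nothing on this sample tells us nothing.
            continue
        if fa <= fb:
            pairs.append((a, b))
        elif fb <= fa:
            pairs.append((b, a))
    return pairs


# Hypothetical log: problem IDs each tool flagged across a sample of drafts.
sample_flags = {
    "detector_a": {"p1", "p2"},
    "detector_b": {"p1", "p2"},
    "plagiarism": {"p3"},
}
overlap = redundant_pairs(sample_flags)
print(overlap)
```

A small sample can make two tools look redundant by coincidence, so a result like this is a prompt to look closer, not a reason to cancel a subscription.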

The pipeline adds less time than you would expect. Detection and plagiarism scans take under a minute each. Brand voice checks happen in real time. Human review, the longest step, should happen regardless. The total added time is roughly ten to fifteen minutes per piece, which is negligible compared to the reputational cost of publishing unverified AI output.

Yes, verification is worth running even when experienced editors are in the loop. First drafts are where AI patterns, hallucinated facts, and originality issues are most likely to appear. Verification tools serve as a safety net on days when the editor is rushed or when content passes through fewer review stages than usual. The purpose is not to question the team’s ability. It is to protect the output when time pressure means the usual standards might slip.