Self Driving Cars

Mobileye / Intel

Watch Mobileye's autonomous car push through traffic as assertively as a human

Test vehicles only use cameras for now, but can negotiate the busy and aggressive streets of Jerusalem

A concern for autonomous cars is that human drivers will quickly learn how to bully them out of the way, assuming the machine's desire to stay safe will always outweigh its passenger's desire to get to work on time.

But, according to Israeli computer vision company Mobileye, autonomous cars will be taught how to be every bit as assertive as humans, when necessary.

The Intel-owned company, which formerly worked with Tesla on the electric car maker's Autopilot driver assistance system, is currently teaching a fleet of autonomous vehicles how to navigate the challenging and sometimes aggressive streets of Jerusalem. If an autonomous car can drive here, Mobileye says, it can drive almost anywhere.

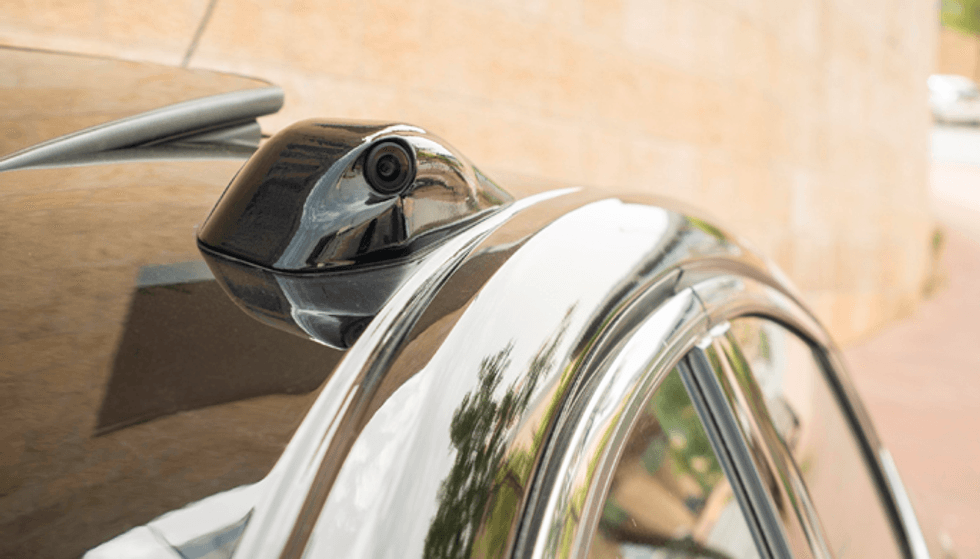

Using its 20 years of experience in computer vision, Mobileye is taking the unusual approach of fitting its test vehicles exclusively with cameras.

Unlike Waymo, Uber and others, Mobileye is not currently using lidar, radar or any other kind of imaging system; it just uses 12 cameras on each of its 100 cars. Radar and lidar, the company says, will be added to its fleet in the coming weeks as it enters the second stage of development.

Testing on the roads of its own country makes obvious logistical sense, but the Israeli capital is also useful to Mobileye in other ways.

Amnon Shashua, co-founder and chairman of Mobileye, said on May 17: "Jerusalem is notorious for aggressive driving. There aren't perfectly marked roads. And there are complicated merges. People don't always use crosswalks. You can't have an autonomous car traveling at an overly cautious speed, congesting traffic or potentially causing an accident. You must drive assertively and make quick decisions like a local driver."

A video published by Mobileye, above, shows how its car will switch lanes to overtake slower vehicles, spotting gaps and taking them in a safe but assertive way. Pushing things a little further, the autonomous car also knows that sitting on the very edge of its lane tells vehicles behind that it wants to move across. Such actions have not been shown publicly by other driverless car companies.

The manners, aggression and thought processes of an autonomous car, known as its driving policy, "makes all other challenging aspects of designing AVs [autonomous vehicles] seem easy," Shashua says, adding that there is a balance to be struck between driving safely and being overly cautious.

Where human drivers instinctively know the difference between assertive and dangerous driving, computers need to be taught by converting these intangible rules of the road into a mathematical formula.

This means splitting the car's intelligence into two layers. The first is the artificial intelligence used to get the car from A to B, based on what it can see through the 12 cameras. The AI's plans - to overtake here, or switch lanes there - are fed to what Mobileye calls the Responsibility-Sensitive Safety layer, which has a mathematical understanding of rules like safe following distances and right of way. If the AI proposes an action that would violate one of the rules, or "common sense principles," the action is rejected by the RSS layer.
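As a rough illustration of how a rule such as "safe following distance" can become a formula that vetoes a planner's proposal, here is a minimal Python sketch. The distance formula follows the longitudinal safe-distance rule in Mobileye's published RSS paper; the parameter values, the `Proposal` structure and the `safety_layer` function are assumptions made up for this example and do not represent Mobileye's actual code.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    """A manoeuvre suggested by the planning AI (hypothetical structure)."""
    name: str
    gap_m: float       # gap to the car ahead after the manoeuvre, in metres
    ego_speed: float   # speed of our (rear) car, in m/s
    lead_speed: float  # speed of the car ahead, in m/s


def safe_following_distance(v_rear, v_front, response_time=1.0,
                            max_accel=2.0, min_brake=4.0, max_brake=8.0):
    """Minimum safe gap in metres, per the published RSS longitudinal rule.

    Worst case assumed: the rear car accelerates at max_accel during its
    response time and then brakes at only min_brake, while the front car
    brakes at max_brake. The default values are illustrative assumptions.
    """
    v_after = v_rear + response_time * max_accel
    distance = (v_rear * response_time
                + 0.5 * max_accel * response_time ** 2
                + v_after ** 2 / (2 * min_brake)
                - v_front ** 2 / (2 * max_brake))
    return max(distance, 0.0)


def safety_layer(proposal):
    """Return True if the proposal keeps at least the safe gap, else False."""
    required = safe_following_distance(proposal.ego_speed, proposal.lead_speed)
    return proposal.gap_m >= required


if __name__ == "__main__":
    for p in (Proposal("merge into wide gap", 30.0, 10.0, 10.0),
              Proposal("merge into tight gap", 15.0, 10.0, 10.0)):
        verdict = "accepted" if safety_layer(p) else "rejected"
        print(f"{p.name}: {verdict}")
```

With both cars travelling at 10 m/s (36 km/h) and these assumed parameters, the required gap works out to 22.75 metres, so the first proposal passes and the second is rejected. The planner stays free to be assertive; the safety check only rules out manoeuvres that break the formula.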

Mobileye says it has publicly shared this approach to autonomous vehicle safety, and is looking to collaborate on an industry-led safety standard which can be followed by all autonomous vehicle systems, no matter which manufacturer they belong to.

Shashua explains how Mobileye wants to create a "comprehensive, end-to-end solution" using just cameras — in other words, a vehicle which is fully autonomous without using radar or lidar. Then, the company will create a new end-to-end system using other technologies, like lidar.

This way, Mobileye says it will have created "true redundancy" where the car can navigate autonomously with its camera system functioning, or without. Other companies fuse data together from cameras, radar and lidar, but if one element fails the rest of the system cannot fully take up the slack. Shashua describes such a system, relying on data from multiple sources, as being like "a string of Christmas tree lights where the entire string fails when one bulb burns out."
