
What iOS 12 reveals about Apple’s plans for the future of Face ID

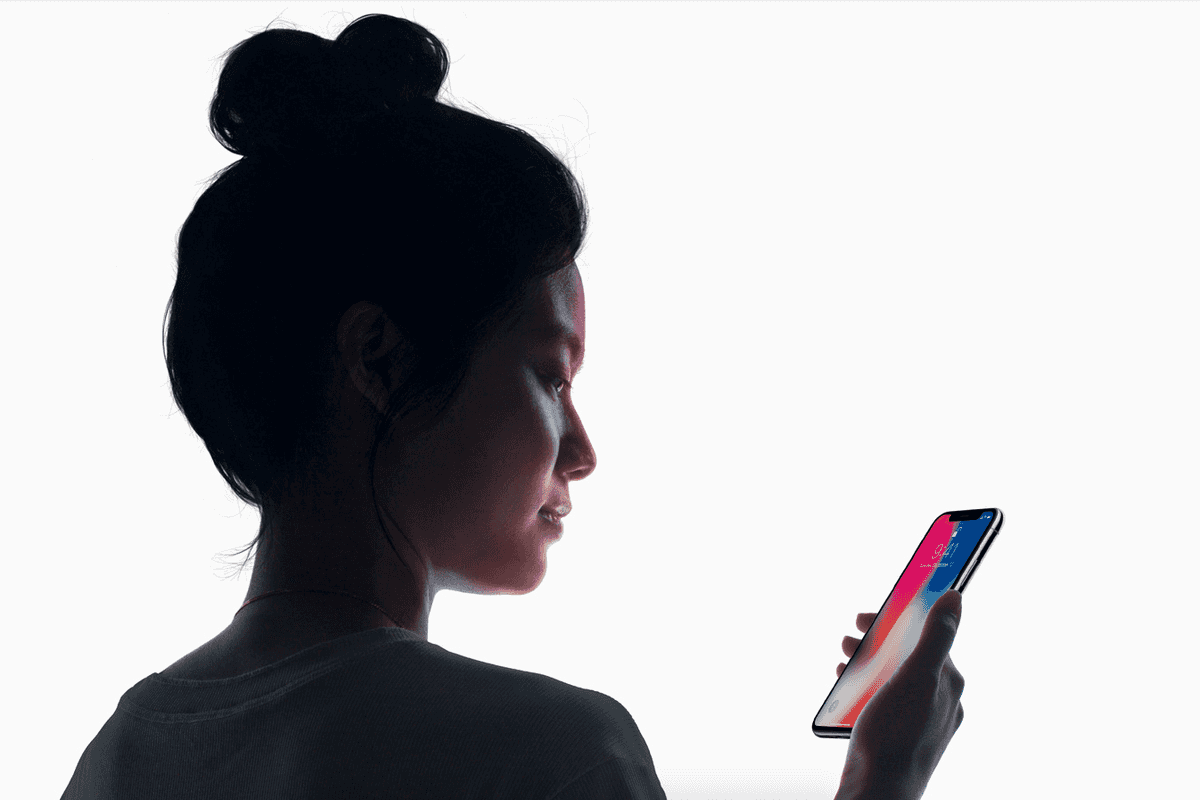

Hidden inside the first beta of iOS 12 are clues about what Apple will do next with Face ID


Less than 24 hours after Apple announced iOS 12 and made the first beta build available to developers, secrets about future products and features are starting to leak out.

First, it appears that Apple is working on a way to make Face ID work for people who drastically change the way they look on a regular basis. This doesn't necessarily mean the system will work perfectly with two completely different people enrolled on it (as Touch ID can with fingerprints from different people), but The Verge claims it does indeed work with different faces.


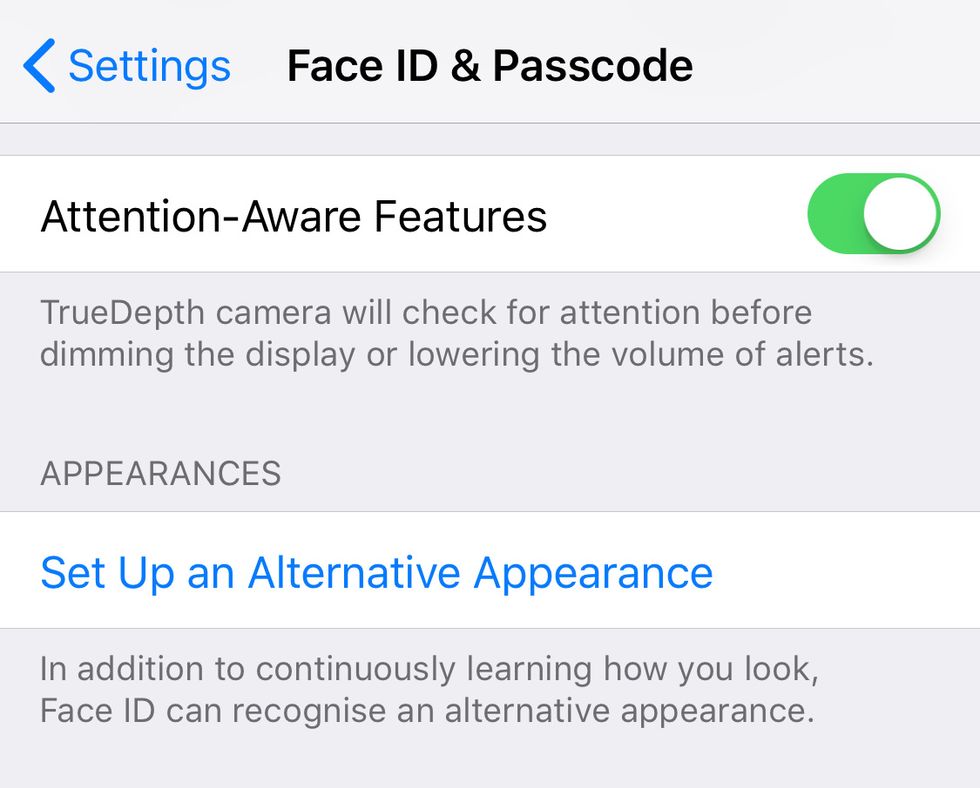

Discovered by several Apple blogs and verified by GearBrain, the new setting appears in the iOS 12 beta as "Alternative Appearance" on the Face ID settings page.

Beneath this, Apple explains: "In addition to continuously learning how you look, Face ID can recognize an alternative appearance."

If the feature works as hoped, this will likely come as good news to hospital staff who wear Face ID-defeating face masks, and other people whose profession requires them to obscure their face or drastically change the way they look on a regular basis.

Teaching Face ID to recognize more than one person could also tie in with another feature discovered in the iOS 12 beta. App developer Guilherme Rambo posted a tweet showing off a user interface Apple has created for setting up Face ID on an iPad.

No current iPad offers Face ID, so this discovery suggests Apple is preparing to launch a new model with the identification technology. This lines up with multiple rumors claiming the new iPad Pro will ditch the home button and adopt a screen notch, like that of the iPhone X.

This also fits with Apple's own announcement that iOS 12 will bring iPhone X-style gestures to the iPad, where swiping down from the top-right corner reveals Control Center. Additionally, the iPad's clock has been moved from the center of the status bar to the top-left corner, potentially making way for a notch to house the Face ID system and front-facing camera.

If we take things a step further and combine these two pieces of information, we could soon see an iPad that (finally) allows for multiple user accounts, each authenticated by Face ID. This would of course be a boon for families, who could share a single iPad that both parent and child can unlock with Face ID.

The Face ID setup UI is finally working on iPad. Clearly not done yet as can be seen by the descriptions mentioning "iPhone". But it's a start :) pic.twitter.com/PVQgfbne15

— Guilherme Rambo (@_inside) June 5, 2018

Finally, Apple announced this week that iOS 12 now tracks a user's tongue when using the Animoji (and new Memoji) feature. At first glance, this looks like a simple update that makes the animated emoji more fun (winking is also possible for the first time), but we think there could be more to it.

Apple could potentially use the position of a person's tongue and teeth (as well as the rest of their face) to lip-read. This could form the basis of a future accessibility feature in iOS.

This idea is explored by Ahmad Hassanat, associate professor of computer science at Mutah University, Jordan, in his 2011 paper Visual Speech Recognition. On using computers to lip-read, Hassanat said: "There is valuable information encapsulated within the region of interest that has a significant association with the spoken [word], e.g. the [appearance] of the tongue and teeth in the image during speech."

Hassanat added: "Some phonemes like [th] involve the appearance of the tongue... Therefore detecting the tongue in the region of interest reveals something about the uttered phoneme and, by implication, the visual word."

Is there more to the Animoji update than sticking out one's tongue? Is Apple going to use Face ID to lip-read? There are around four months of development left before iOS 12 launches to the public, so let's wait and see.
