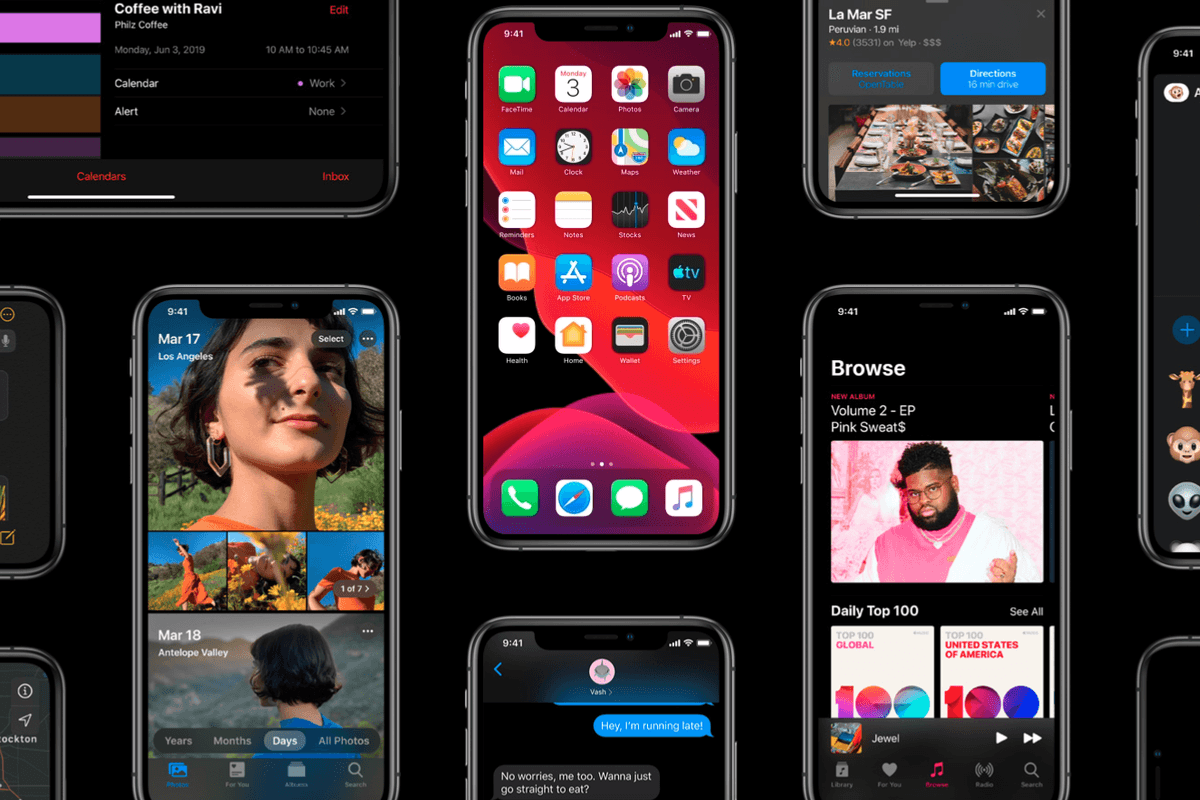

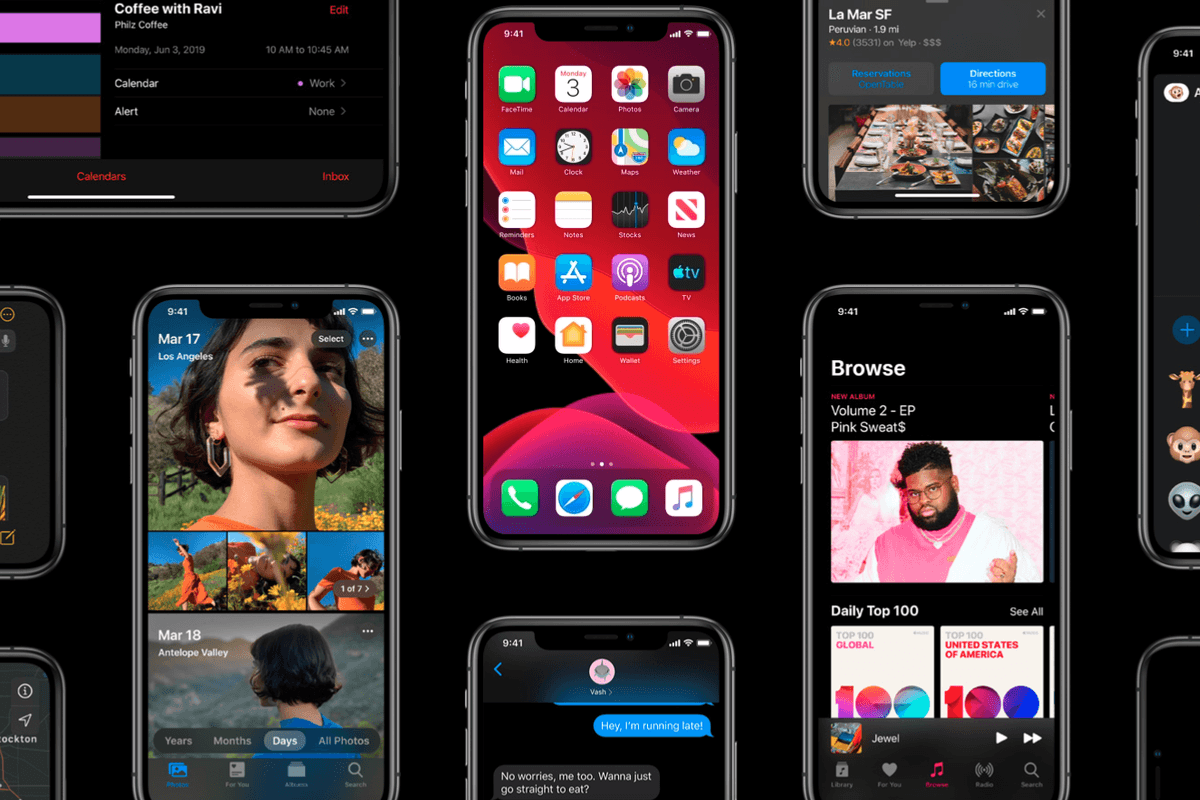

New iPhone feature digitally moves your eyes toward the camera on FaceTime calls

'Attention Correction' augmented reality feature spotted in latest iOS 13 beta

It may sound like something from a Victorian school, but Attention Correction is instead a new iPhone feature which artificially moves your eyes to make it appear as if you are looking at the camera.

We all know what this feature is trying to correct: the slightly odd view you get on a FaceTime call when the person you are speaking to looks at the screen instead of the front-facing camera. Their eyes appear a little off-center, which breaks eye contact.

With FaceTime Attention Correction in iOS 13, Apple is using the iPhone's augmented reality skills to artificially adjust where your eyes are pointing, moving them so that it appears you are looking directly at the camera - and therefore into the eyes of whoever you're talking to - instead of off-center.

The feature was discovered and revealed on Twitter by Mike Rundle, who posted two images: one of himself looking at the screen in the Camera app, and one of himself looking at the screen during a FaceTime call in iOS 13. The latter image shows his eyes looking right into the camera, an adjustment the iPhone makes on the fly.

Rundle says that, for now, the feature appears only on the iPhone XS and XS Max. GearBrain is also running iOS 13 beta 3 on our iPhone X and can confirm that the Attention Correction option does not appear on its FaceTime settings page.

This suggests the feature requires the extra processing power of the latest iPhones. However, Apple may find a way to make it work on other models by the time iOS 13 leaves beta and is released in the fall. The feature will also almost certainly be present on the new iPhone 11, expected at the same time.

How iOS 13 FaceTime Attention Correction works: it simply uses ARKit to grab a depth map/position of your face, and adjusts the eyes accordingly.

Notice the warping of the line across both the eyes and nose. pic.twitter.com/U7PMa4oNGN

— Dave Schukin 🤘 (@schukin) July 3, 2019

Twitter user Dave Schukin also tried the feature and noticed that when he moved a straight line (in his case, the arm of a pair of glasses) up and down across his eyes, the line warped as it passed over them, demonstrating how the feature subtly manipulates the image.

"It simply uses ARKit to grab a depth map/position of your face, and adjusts the eyes accordingly. Notice the warping of the line across both the eyes and nose," Schukin tweeted.
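Apple has not documented how Attention Correction works, and Schukin's explanation is an observation rather than a confirmed implementation. To illustrate why a straight line would bend as it crosses the eyes, here is a minimal Python sketch of a localized image warp, assuming a simple model in which pixels near each eye are nudged upward with a Gaussian falloff. The function names, eye coordinates, `shift`, and `sigma` values are all hypothetical; this is not Apple's method or ARKit's API.

```python
import math


def warp_point(x, y, eye_centers, shift, sigma):
    """Move one pixel coordinate vertically (hypothetical model).

    Each eye pulls nearby pixels up by `shift`, scaled by a Gaussian
    falloff of width `sigma`, so the effect fades smoothly with distance.
    """
    dy = 0.0
    for ex, ey in eye_centers:
        d2 = (x - ex) ** 2 + (y - ey) ** 2
        dy += shift * math.exp(-d2 / (2 * sigma ** 2))
    # Image y grows downward, so subtracting dy moves the pixel up.
    return x, y - dy


def warp_line(y, x_values, eye_centers, shift=4.0, sigma=10.0):
    """Warp points on a horizontal line (constant y), like the glasses arm in the demo."""
    return [warp_point(x, y, eye_centers, shift, sigma) for x in x_values]


# Hypothetical eye positions (in pixels) and a line drawn straight through them.
eyes = [(40, 50), (80, 50)]
for x, y in warp_line(50, [0, 40, 60, 80, 120], eyes):
    print(f"x={x:>3}  y={y:.2f}")
```

Points far from the eyes barely move, while points over the eyes shift up by roughly `shift` pixels, so the once-straight line comes out bent: the same kind of artifact visible in Schukin's clip.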
