Artificial intelligence learns to see humans through walls

MIT-trained computer software can see people using only radio frequencies

Artificial intelligence has been taught to see people through walls, using nothing more than a radio signal 1,000 times weaker than Wi-Fi.

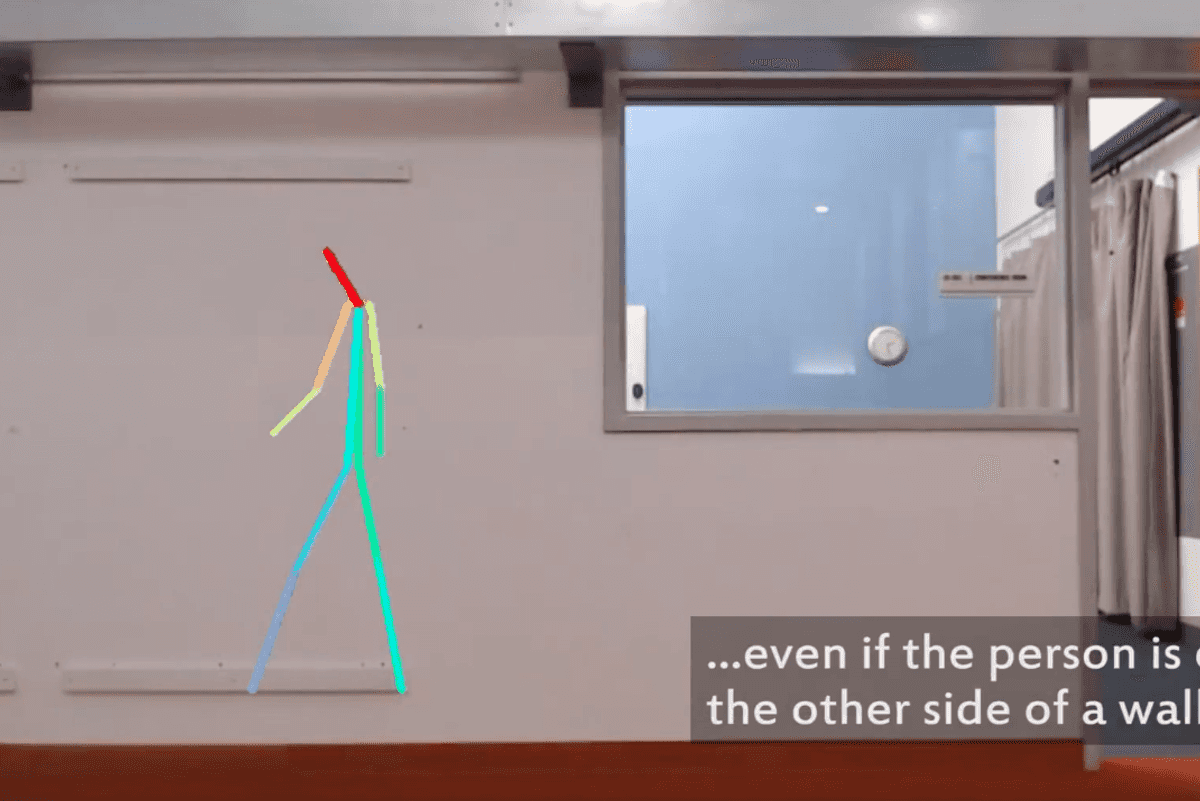

Developed by a team of researchers at the Massachusetts Institute of Technology (MIT), the system can detect the basic stick-figure outline of a human through a wall. It can do so with almost the same accuracy whether the wall is there or not.

MIT's Computer Science & Artificial Intelligence Lab said: "The researchers use a neural network to analyze radio signals that bounce off people's bodies, and can then create a dynamic stick figure that walks, stops, sits, and moves its limbs as the person performs those actions."

Called RF-Pose, the system works because radio frequencies pass through walls but reflect off humans, revealing their location in a similar way to how radar and lidar systems work.
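The article does not say how RF-Pose turns reflections into positions, so the following is only a sketch of the generic time-of-flight relation that radar-style ranging relies on: a signal travels to a reflector and back, so the distance is half the round-trip time multiplied by the speed of light. The function name and the 20-nanosecond example value are illustrative assumptions, not details from the MIT work.

```python
# Generic radar-style ranging, NOT the RF-Pose method itself.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def reflector_distance(round_trip_seconds: float) -> float:
    """Distance to a reflector, given the round-trip time of a radio signal.

    The signal covers the distance twice (out and back), hence the division by 2.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


# Hypothetical example: an echo that returns after 20 nanoseconds.
distance_m = reflector_distance(20e-9)
print(f"Reflector is about {distance_m:.2f} m away")
```

A wall adds little delay to the signal, which is one reason the same relation can still hold when the person is in another room.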

To train the artificial intelligence, the system was given synchronized wireless and visual inputs. In other words, it could see people through a regular video camera and, at the same time, receive the radio signals that bounced off them. That way, the AI could work out which radio frequency patterns matched actions like sitting, standing and walking.
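The training idea described above, where a camera-based system labels what the radio-based system should learn, can be sketched in a few lines. This is a toy illustration under heavy assumptions: the data is synthetic, the "teacher" is simulated as the true pose plus noise, and the "student" is a plain least-squares linear model rather than the neural network the researchers used. All names and sizes are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: frames, RF feature length, number of 2D keypoints.
N_FRAMES, RF_DIM, N_KEYPOINTS = 500, 64, 14
POSE_DIM = N_KEYPOINTS * 2  # (x, y) per keypoint

# Synthetic ground truth: one flattened stick-figure pose per frame.
true_pose = rng.normal(size=(N_FRAMES, POSE_DIM))

# Synthetic RF features: an unknown linear mixture of the pose plus sensor noise.
mixing = rng.normal(size=(POSE_DIM, RF_DIM))
rf_features = true_pose @ mixing + 0.01 * rng.normal(size=(N_FRAMES, RF_DIM))

# "Teacher": stands in for a camera-based pose estimator (true pose plus noise).
teacher_pose = true_pose + 0.05 * rng.normal(size=true_pose.shape)

# Split the synchronized recordings into training and held-out frames.
train, test = slice(0, 400), slice(400, N_FRAMES)

# "Student": fit RF features -> teacher poses by ordinary least squares.
weights, *_ = np.linalg.lstsq(rf_features[train], teacher_pose[train], rcond=None)

# After training, the student predicts poses from the radio features alone.
student_pose = rf_features[test] @ weights

student_error = np.mean(np.abs(student_pose - true_pose[test]))
teacher_error = np.mean(np.abs(teacher_pose[test] - true_pose[test]))
print(f"student mean abs error: {student_error:.3f}")
print(f"teacher mean abs error: {teacher_error:.3f}")
```

The key point the sketch preserves is that the camera is only needed while fitting `weights`; prediction on the held-out frames uses `rf_features` by itself.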

How the system taught itself with these inputs was particularly interesting. MIT explains in a press release: "Since cameras can't see through walls, the network was never explicitly trained on data from the other side of a wall - which is what made it particularly surprising to the MIT team that the network could generalize its knowledge to be able to handle through-wall movements."

Professor Antonio Torralba said: "If you think of the computer vision system as the teacher, this is a truly fascinating example of the student outperforming the teacher."

Once the system had been trained on what to look for, it no longer needed the visual input because it could track people just as clearly using the radio frequency alone.

A paper on the research reads: "Once trained, the network uses only the wireless signal for pose estimation...the radio-based system is almost as accurate as the vision-based system used to train it. Yet, unlike vision-based pose estimation, the radio-based system can estimate 2D poses through walls despite never trained on such scenarios."

A demonstration of how the system works can be seen in the video embedded above. This also shows how it can track the movements of several people at once, although when they overlap the person in the background disappears.

Naturally, such a system lends itself to surveillance. The researchers say: "Estimating the human pose is an important task in computer vision with applications in surveillance, activity recognition, gaming etc."

Beyond surveillance, the researchers explain how the technology could be used to analyze the movements of people with diseases like Parkinson's, multiple sclerosis and muscular dystrophy. The technology could, MIT says, provide "a better understanding of disease progression and allow doctors to adjust medications accordingly, while providing the added security of monitoring for falls, injuries and changes in activity patterns."

Professor Dina Katabi, who co-wrote the MIT paper, said: "We've seen that monitoring patients' walking speed and ability to do basic activities on their own gives healthcare providers a window into their lives that they didn't have before, which could be meaningful for a whole range of diseases. A key advantage of our approach is that patients do not have to wear sensors or remember to charge their devices."
