This MIT robot learns how to pick up items it has never seen before

MIT's Computer Science and Artificial Intelligence Laboratory is behind the technology

Anyone who's seen inside a modern car factory knows how impressive robots look as they pick up items and move them quickly with pinpoint accuracy.

But they can do this only because they are programmed to repeat the same movements over and over rather than think for themselves, much as the Moley robotic kitchen system duplicates complex recipes but never comes up with variations of its own.

This could soon change, however, as new technology developed by the Computer Science and Artificial Intelligence Laboratory at the Massachusetts Institute of Technology gives robots the freedom to work things out for themselves.

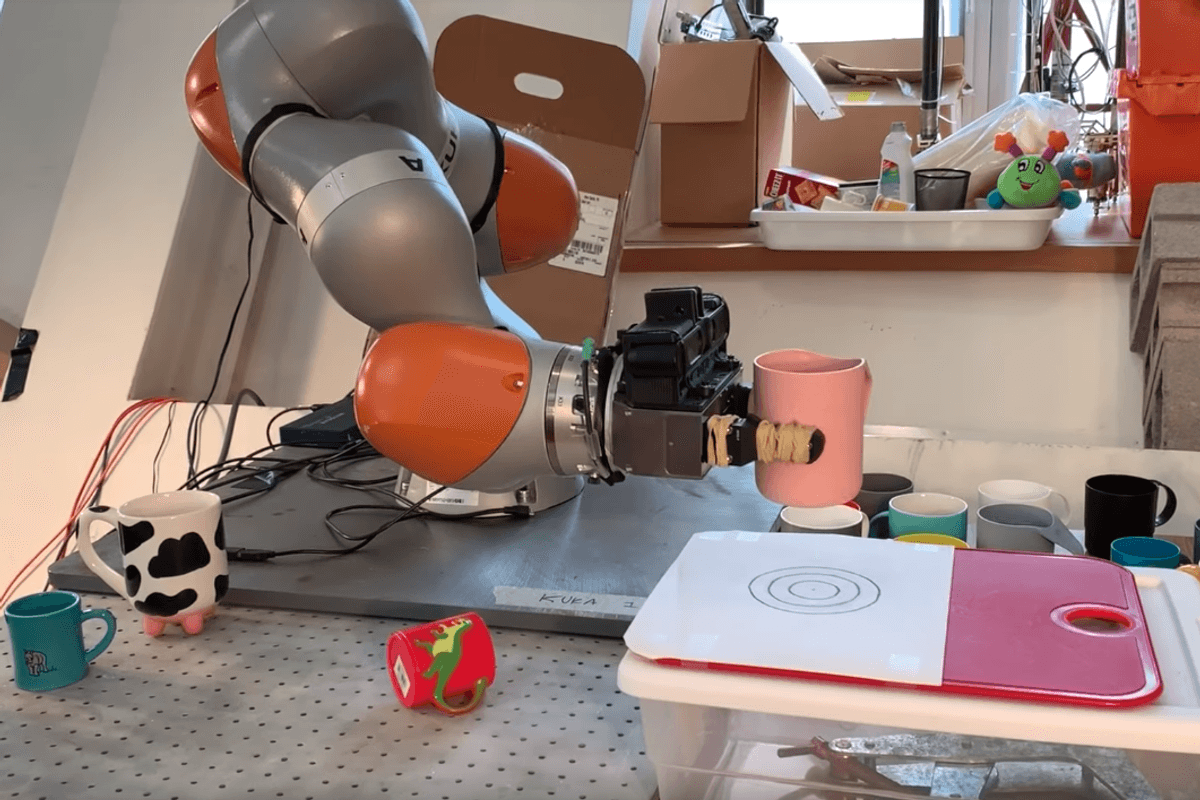

Called kPAM (KeyPoint Affordances for Category-Level Manipulation), the technology allows a robotic arm to learn how to pick up items it's never interacted with or seen.

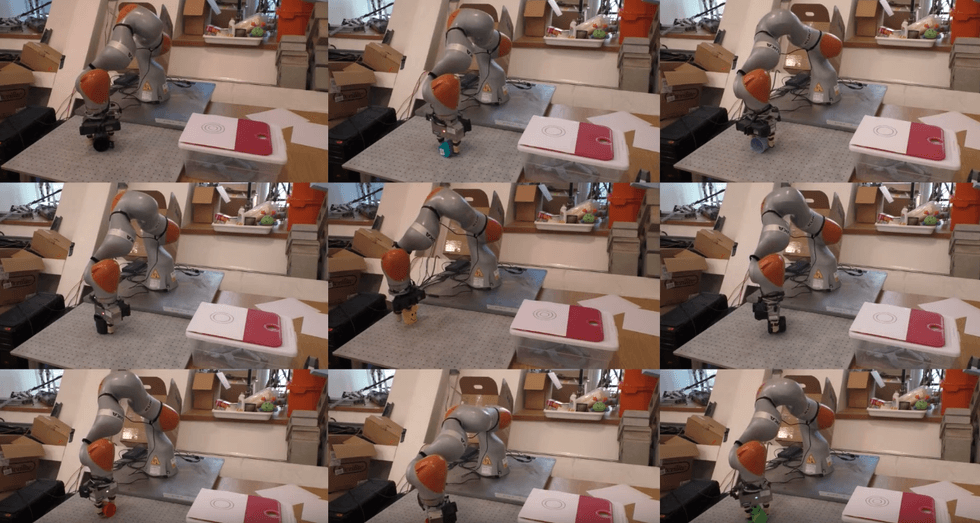

The technology has been demonstrated with two categories of items: mugs and shoes. Once the machine knows how to pick up a single mug or shoe and place it at the desired location, which may also involve turning the item around while moving it, it can handle any type of mug or shoe, no matter the size and style.

Once the robotic arm recognizes the generic shape of a shoe, for example, it knows to grab the shoe by the back of the ankle before placing the footwear on a shelf. Similarly, after identifying a mug, the robot knows to lift the cup by the handle, and then move it to the target location. The size of the shoe or the design and shape of the mug and its handle do not matter; the AI can still work out what to do.

The emphasis here is on putting items down as much as picking them up. If a robot is strong enough, and can open its grabbing hand wide enough, it can pick up a wide range of objects. But putting them down in a meaningful way, such as hooking a mug on a stand by its handle or placing shoes neatly on a rack, is more difficult, and that is what researchers at MIT have achieved with this technology.

The robot does this by identifying 'key points' on the object. For mugs it just needs three — the side, bottom and handle of a mug. Once it understands how to identify these three key points, it can do so on any mug with a handle, and interact with it correctly, even if it is lying on its side.
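To make the idea concrete, here is a minimal Python sketch of how three key points could be turned into a movement. It is an illustration only, not the researchers' implementation: the function names (`frame_from_keypoints`, `rigid_transform`, `apply_transform`) and all coordinates are invented for this example, and it assumes the key points have already been detected as 3D positions in meters. It builds a coordinate frame from the bottom, side and handle points of a mug lying on its side, then computes the rotation and translation that would carry those points to an upright target pose.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    n = math.sqrt(dot(v, v))
    return tuple(x / n for x in v)

def frame_from_keypoints(bottom, side, handle):
    """Build an origin and three orthonormal axes from three key points.

    Hypothetical helper: assumes the three points are not collinear.
    """
    x = normalize(sub(side, bottom))
    z = normalize(cross(x, sub(handle, bottom)))
    y = cross(z, x)
    return bottom, (x, y, z)

def rigid_transform(detected, target):
    """Return (R, t) that maps the detected key-point frame onto the target frame."""
    o_d, axes_d = frame_from_keypoints(*detected)
    o_t, axes_t = frame_from_keypoints(*target)
    # R sends each detected axis to the matching target axis.
    R = [[sum(at[i] * ad[j] for at, ad in zip(axes_t, axes_d))
          for j in range(3)] for i in range(3)]
    rotated_origin = tuple(dot(R[i], o_d) for i in range(3))
    t = sub(o_t, rotated_origin)
    return R, t

def apply_transform(R, t, p):
    return tuple(dot(R[i], p) + t[i] for i in range(3))

# Invented example data, ordered (bottom, side, handle).
# The detected mug is lying on its side on the table.
detected = ((0.20, 0.10, 0.0), (0.24, 0.05, 0.0), (0.27, 0.05, 0.0))
# The target pose is the same mug standing upright elsewhere.
target = ((0.50, 0.0, 0.0), (0.54, 0.0, 0.05), (0.57, 0.0, 0.05))

R, t = rigid_transform(detected, target)
moved = [apply_transform(R, t, p) for p in detected]
```

Because the movement is defined only by the key points, the same code would run unchanged on a taller or wider mug: the bottom point and the axis directions would still line up with the target, even though the other points would land slightly differently.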

Shoes are more complex, with six key points needing to be identified. But once the robot has done that, it can pick up and successfully move, then neatly put down, over 20 different types of footwear, from slippers to boots.

A paper detailing the technology, written by Lucas Manuelli, Wei Gao, Peter Florence and Russ Tedrake, states: "Extensive hardware experiments demonstrate our method can reliably accomplish tasks with never-before seen objects in a category, such as placing shoes and mugs with significant shape variation."

The technology could eventually be put to work in factories, where robots could, for example, accurately pick up and move vehicle tires or wheels, no matter their design or exact dimensions.
