Tesla with Autopilot tricked to drive with no one behind the wheel

The experiment was conducted after a Tesla crashed seemingly with no one behind the wheel

An experiment conducted by Consumer Reports has found that a Tesla's Autopilot system could be tricked into operating the car with no one in the driver's seat.

The research into Autopilot's safety systems took place in the wake of a fatal accident in Texas, where a Tesla Model S was found to have crashed with no one behind the wheel. Two men, one seated in the front passenger seat and one in the back, lost their lives in the single-vehicle incident.

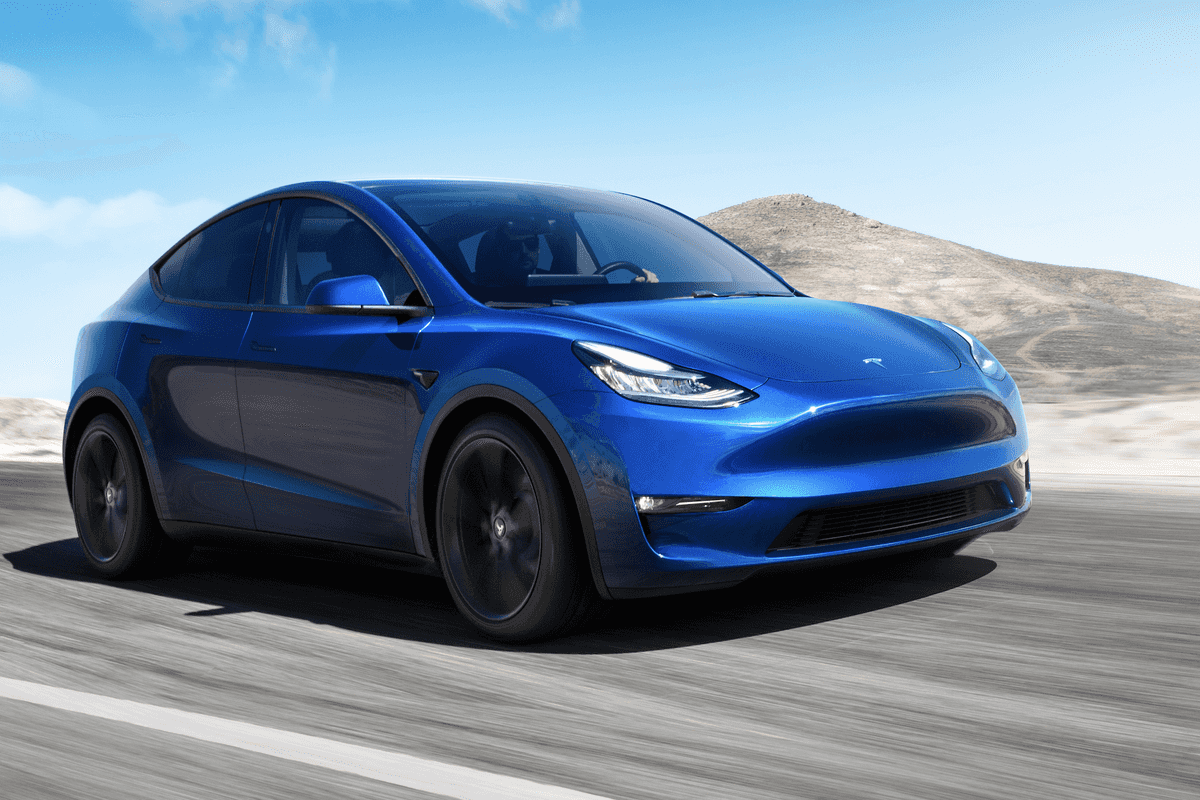

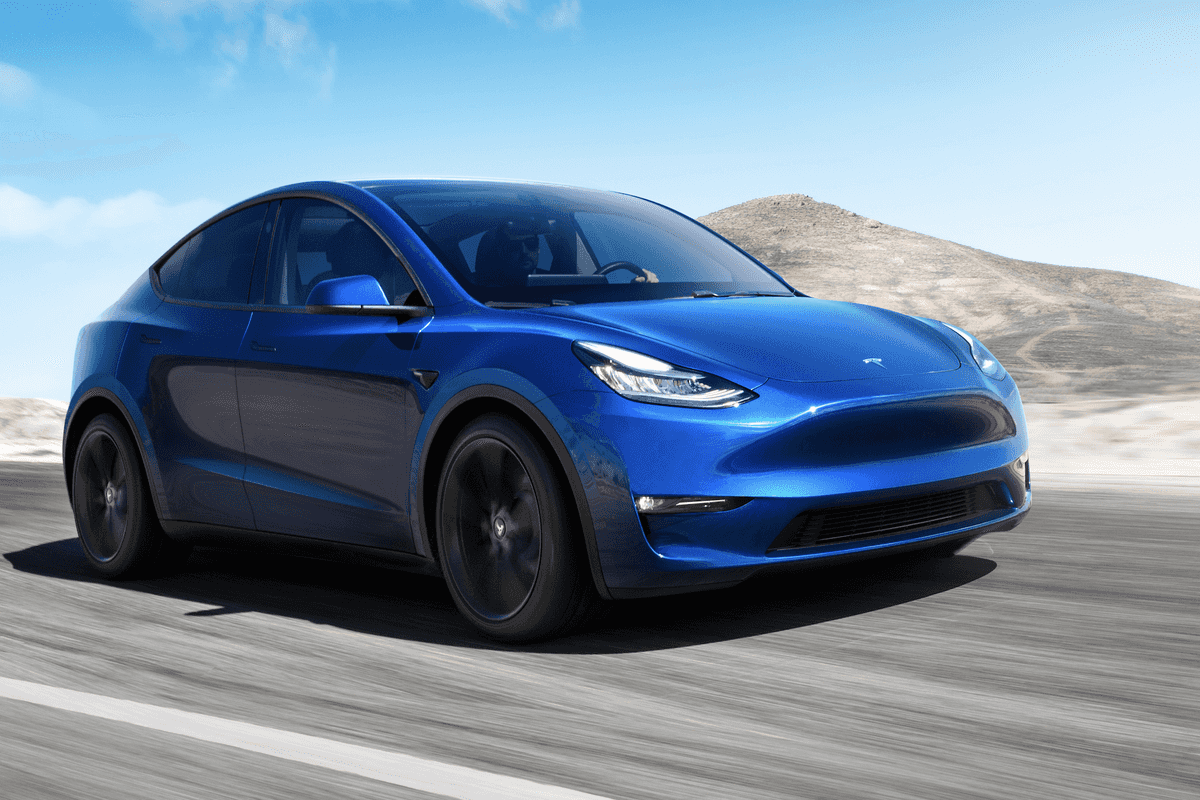

Over several trips across a closed, half-mile test track, Consumer Reports researchers found their Tesla Model Y would continue to follow white lane lines using its Autopilot system, despite there being no one sitting behind the wheel. The vehicle is also said to have issued no warnings and given no indication that the driver's seat was empty.

Jake Fisher, Consumer Reports' senior director of auto testing, said: "In our evaluation, the system not only failed to make sure the driver was paying attention, but it also couldn't tell if there was a driver there at all. Tesla is falling behind other automakers like GM and Ford that, on models with advanced driver assist systems, use technology to make sure the driver is looking at the road."

Tesla boss Elon Musk responded to the crash on Twitter, before the Consumer Reports experiment, saying that Tesla's investigation so far showed that Autopilot was not enabled and that the vehicle in question wasn't fitted with the more advanced Full Self-Driving Autopilot option, known as FSD.

Musk said: "Data logs recovered so far show Autopilot was not enabled and this car did not purchase FSD. Moreover, standard Autopilot would require lane lines to turn on, which this street did not have."

GearBrain would like to make clear that under no circumstances should anyone try to replicate Consumer Reports' careful experiment with their Tesla.

Consumer Reports notes that its tester sat on top of a buckled driver's seat belt, as Autopilot will disengage if the belt is unbuckled while the vehicle is in motion. The tester set off, engaged Autopilot, and brought the car to a stop using the scroll wheel on the steering wheel that adjusts Autopilot speed. He then placed a small weighted chain on the steering wheel to simulate the weight of a driver's hand, moved to the front passenger seat, and increased the Autopilot speed to make the Tesla set off again.

Jake Fisher, who conducted the experiment, said: "The car drove up and down the half-mile lane of our track, repeatedly, never noting that no one was in the driver's seat, never noting that there was no one touching the steering wheel, never noting there was no weight on the seat. It was a bit frightening when we realized how easy it was to defeat the safeguards, which we proved were clearly insufficient."

Consumer Reports says that such an experiment would not be possible in a GM vehicle using the company's Super Cruise driver assistance system, as that system has a camera facing the driver to check that they are present and paying attention.

Fisher added: "Let me be clear: Anyone who uses Autopilot on the road without someone in the driver seat is putting themselves and others in imminent danger."

Tesla has yet to comment further on the fatal crash or the findings of Consumer Reports.

On April 22, two Democratic senators wrote a letter to the US National Highway Traffic Safety Administration, asking it to investigate the fatal accident.