
What happens when the smart home starts thinking for itself?

It doesn't take much imagination to predict the future of the sentient smart home

With the creation of Alexa and Google Assistant routines, along with the tinkering of some IFTTT applets, today's smart home can already do a good job of appearing automated.

The house may not entirely think for itself, but (providing you have the budget and the inclination) you can have fans switch on at a certain temperature, window blinds go up and down as the sun moves across the sky, and the garden water itself in tune with a daily weather forecast.

But to say such a smart home thinks for itself is like believing a parrot really understands what it is saying.

The truly sentient smart home doesn't yet exist, but that doesn't mean companies like Amazon and Google don't want (or expect) this ability to appear sometime in the future.

We are already part-way there. Amazon has Alexa Hunches, a system which has Alexa make suggestions based on your usual behavior in the home.

For example, let's say you have a routine set up which turns down the heating when you say goodnight to Alexa. Now let's say you routinely switch off your kitchen smart lights after saying goodnight. If one day you say goodnight but don't switch the lights off, Alexa will point out that you haven't switched them off as normal and ask whether it should do so.

If you then say 'Yes', Alexa will go ahead and switch the light off for you.
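The logic behind a hunch like this can be sketched in a few lines of Python. This is only an illustration of the idea described above, not how Amazon implements Hunches: the function name `find_hunches`, the action labels, the sample data and the 75 percent threshold are all invented for the example.

```python
from collections import Counter


def find_hunches(history, tonight, min_ratio=0.75):
    """Return habitual post-goodnight actions that are missing tonight.

    history: list of sets, one per past night, of actions taken after "goodnight".
    tonight: set of actions taken so far tonight.
    min_ratio: assumed share of nights an action must appear on to count as usual.
    """
    if not history:
        return []
    # Count on how many past nights each action was performed.
    counts = Counter(action for night in history for action in night)
    usual = {a for a, n in counts.items() if n / len(history) >= min_ratio}
    # Anything usual that has not happened tonight becomes a suggestion.
    return sorted(usual - set(tonight))


# Invented example data: five past nights.
past_nights = [
    {"heating_down", "kitchen_light_off"},
    {"heating_down", "kitchen_light_off"},
    {"heating_down", "kitchen_light_off"},
    {"heating_down"},
    {"heating_down", "kitchen_light_off"},
]

for action in find_hunches(past_nights, {"heating_down"}):
    print(f"You usually do '{action}' after saying goodnight. Should I do it now?")
```

A real assistant would presumably weigh far more signals than a simple frequency count, but the principle is the same: learn what is normal, then flag the exception and ask before acting.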

This system isn't fully fleshed-out for now, but it gives some insight into what Amazon sees its users wanting from Alexa in the near future. Convenience is the name of the game here, but this functionality comes from artificial intelligence like Alexa taking a closer look at you and your daily routines.

A reminder to switch the light off at night is fine, but what if Alexa dials into your Amazon buying habits and starts asking aloud whether you'd like it to re-buy certain personal items?

'You sound unwell, would you like me to order medicine?'

Amazon is starting to head down a path not dissimilar to this. A patent made public in October 2018 (but filed by Amazon in March 2017) details how the retailer is exploring ways to have Alexa notice when you sound unwell, then buy medicine on your behalf.

A drawing included in the patent shows a woman saying: "Alexa, I'm hungry" while coughing and sniffing. Alexa at first responds normally, suggesting a recipe for chicken soup. But when this is declined by the user with a "No, thanks," Alexa says: "Ok, I can find something else. By the way, would you like to order cough drops with one-hour delivery?"

The patent discusses how Alexa could understand the "physical and emotional characteristics of users." Noticing a cough while you ask Alexa for dinner inspiration is one thing, but artificial intelligence reading your emotions and attempting to understand them, then uploading these findings to the servers of a retail giant? This may not sit so comfortably with consumers who bought their Echo Dot to do little more than switch on a light bulb and set a timer for boiling eggs.

Tracking device maker Tile is also exploring pre-emptive technology, where its system can now alert you if it thinks you have left something behind. Say your phone and the Tile on your keys leave the location Tile knows to be your home, but the Tile inside your wallet does not; the app will check if you've forgotten to bring your wallet with you.
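The left-behind check described above boils down to a simple comparison of locations. Here is a minimal Python sketch of that comparison; it is not Tile's actual code, and the function name `left_behind`, the item names and the place labels are assumptions made up for illustration.

```python
def left_behind(locations, home, reference="phone"):
    """Return tracked items still at home after the reference item has left.

    locations: dict mapping item name to its last known place.
    home: the place label treated as home.
    reference: the item assumed to always travel with you (here, the phone).
    """
    # If the phone is still at home, you have not left yet, so nothing is forgotten.
    if locations.get(reference) == home:
        return []
    return sorted(
        item
        for item, place in locations.items()
        if item != reference and place == home
    )


# Invented example: phone and keys are out, wallet stayed behind.
last_seen = {"phone": "street", "keys": "street", "wallet": "home"}

for item in left_behind(last_seen, "home"):
    print(f"Did you mean to leave your {item} at home?")
```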

Systems like Darwin by Delos also work autonomously to make small changes around the home. Announced at the start of 2019, Darwin features adaptive lighting to match your circadian cycles, adjusting automatically to natural sunlight times through the year. There is also a water filtration system, the automatic removal of air pollutants, and continuous air quality monitoring which makes real-time adjustments to how the system works. Effectively, this is a smart home which works in the background to protect your respiratory health.

Alexa, read my mood

Combine this with an Alexa which can read your mood, and the lighting could be set just so when you get home from a stressful day at work, or after a friend calls with some good news. Alexa doesn't need to understand the call, or spy on you at work, but could adjust the home subtly based on your expressions and tone of voice. A bad mood could also encourage Alexa to stay quiet, issuing a simple beep of acknowledgment instead of a chirpy response when you ask, with a sigh, for the lights to be dimmed.

Step outside, and automated irrigation systems like the Rachio 3 monitor hyperlocal weather forecasts to give your plants and lawn exactly the right amount of water each day, or skip a day if it's rained. Again, this is an entirely automated system which, for all intents and purposes, is thinking for itself without your input.

Google too is exploring ways for its assistant to work preemptively. In December 2018, Google wrote about how the Assistant will start proactively warning users about flight delays and cancellations, even before the airline itself has said there are any problems. This hunch comes from predictions made by Google, based on historic flight status data and machine learning. The Assistant will then suggest you plan ahead, but still get to the airport on time.

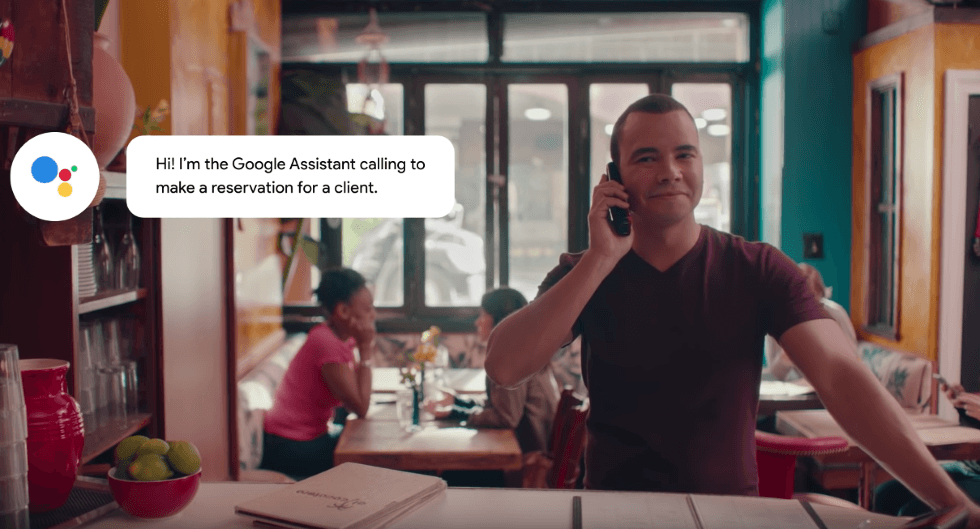

Google of course has Duplex, the Assistant system which can call up restaurants and make reservations on your behalf with a human-like voice. We can see Google wanting to bring this to the home, where it could be used to greet you each morning, reading out the day's news and weather forecast, or letting you know that someone rang your doorbell while you were out. Apps like Ring can already do this with a phone notification, but having Duplex operate this way, aloud, feels much closer to Tony Stark's JARVIS, yet without feeling far from what's possible today.

On a similar theme, how about a smart fridge which automatically orders groceries when yours run low? Knowing the weight of the milk carton and being logged into your Amazon or Whole Foods account would make this a breeze.

Cameras everywhere

Weighing food and drinks may seem a little clunky, yet Amazon could extend a version of the camera systems used in its Amazon Go stores to consumers. In stores, the service automatically bills your account when you leave, having seen exactly which items you picked up and walked out with. Put this tech in the home and it could see what you filled your fridge and cupboards with, then what you put in the bin a few days later. Give it some time to learn your routine, and the algorithm could probably start ordering replenishments to arrive on the exact day items start to run out.

This could work with dog food too — if the indoor security camera doesn't see your aging dog for a few weeks, it could discreetly cancel that recurring Pedigree order you forgot about.

In a more upbeat thought, say a Roomba robotic vacuum has access to your Google calendar, then automatically cleans up a few hours before you have friends coming over. It could also suck up the crumbs an hour or so after they leave, because you always drop some chips on the carpet.

None of this feels too far from reality, as it takes preexisting data and uses it to control smart home devices already on sale. It's just a case of joining the dots and teaching an algorithm until it can be trusted to do the right thing without us even asking. The question is whether gatekeepers like Google will share our data freely enough — a concern which will see the shuttering of IFTTT's Nest smart home applets in August, due to user data being shared too freely beyond Google's control.

While some of this may sound intrusive, there's a potential upside to these abilities. How about an alarm system which automatically calls the police when an unknown face is spotted inside your home? Or one which makes an emergency call — complete with Google Duplex doing the speaking — when temperature sensors suspect a fire is causing a heat spike in the kitchen, but you're on a plane and unable to see the notification?

Navigating the uncanny valley

There is real potential here, especially as the so-called uncanny valley begins to play to the advantage of A.I. developers — the phenomenon where consumers become surprisingly at ease with human-like technologies like voice assistants the more accurate they become.

But it will not be a smooth journey. The valley name refers to a steep dip in the relationship between an A.I.'s realism and our affinity towards the technology. This affinity starts highly positive due to the A.I. not seeming particularly human at all, but dips as its realism increases, before spiking to new, and surprising, highs when the A.I. gets closer to human realism.

The smart home and its artificial intelligence (Alexa, Siri and Google Assistant) are at the early stages of this curve, where human affinity and empathy towards the technology start off high. This will dip as the smart home gets more intelligent, before hopefully rising again as chatting with Google Duplex, for example, becomes the norm.
